Agentic AI · PropTech · React Native · Design Engineering

ALON — Always On

Agentic property assistant, from search to possession


The Problem

Buying or renting property in Pune is an endurance sport. You fill the same filter panel across five portals, get the same five listings back, and the moment you enquire on one, your number is on a loop of broker calls selling you whatever needs to move this month. Trythat.ai had already fixed the discovery problem with AI-driven listings. What was missing was someone to actually do the work: keep searching after you close the tab, remember what you care about, pull up market data when you need to negotiate, and stay out of the way when you don't.

Buyers repeated the same search across 99acres, MagicBricks, IndExTap, and WhatsApp broker lists, with no memory between sessions

A single enquiry leaked a phone number to five different agents. Users stopped enquiring to stop the calls

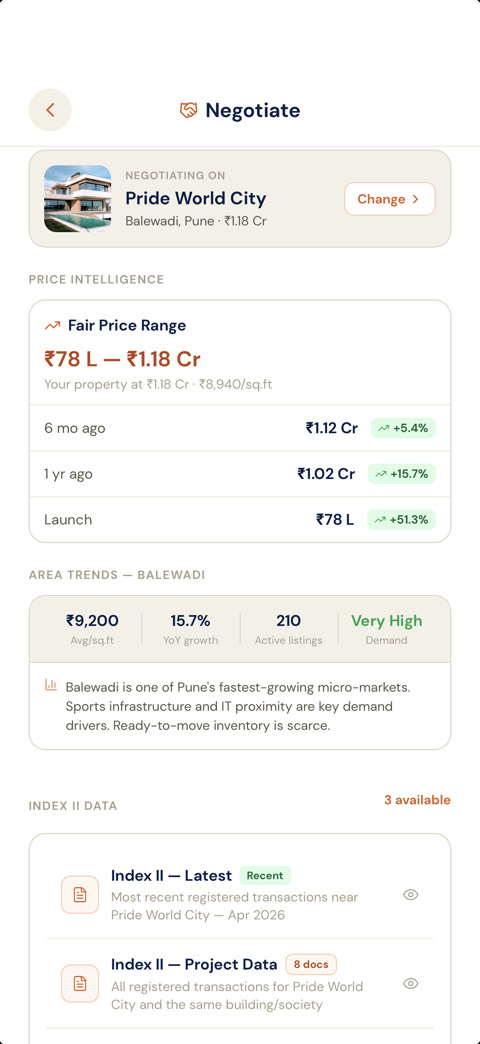

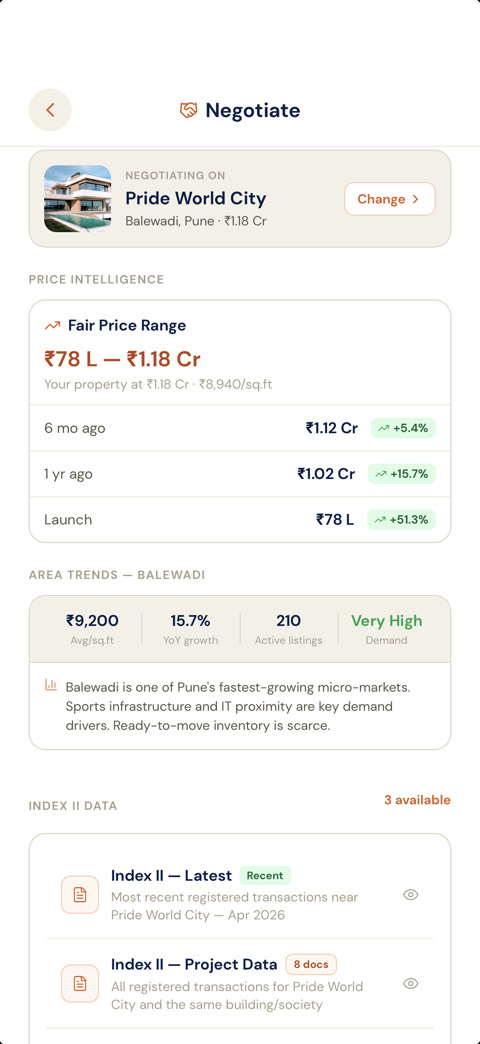

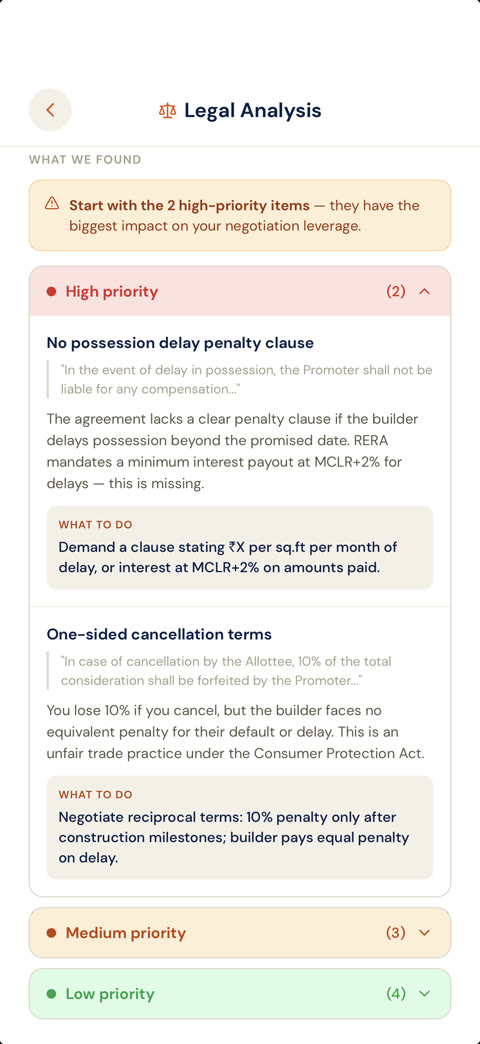

Market data, RERA status, builder history, and negotiation benchmarks lived in separate places and rarely made it into a decision

The property journey has nine real stages from search to possession, but every existing tool only covers one or two

02

The Transformation

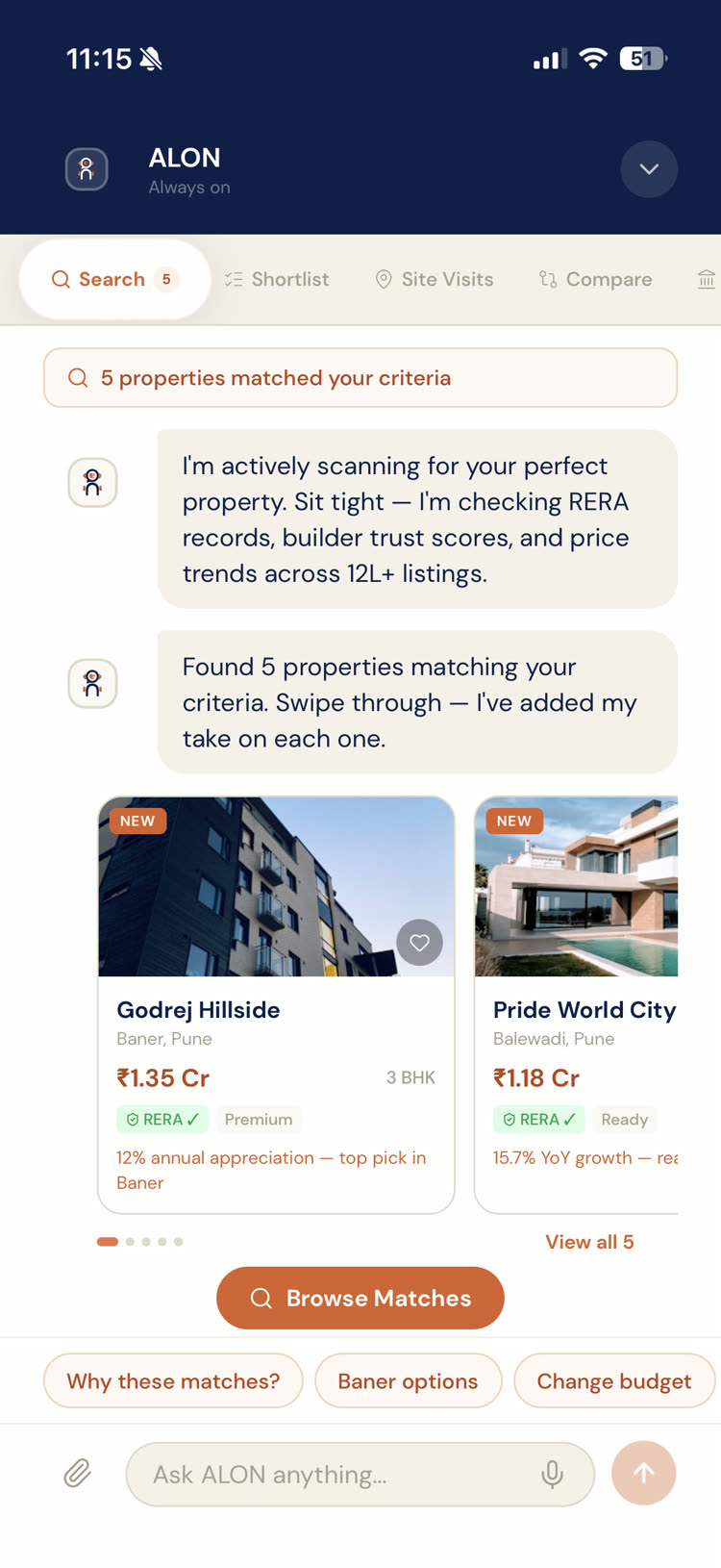

ALON started as a separate app. A few weeks in we pulled it back into Trythat.ai as the conversational layer instead of shipping it standalone. One product, one account, one place where search results and a working agent live side by side. The shift also reframed the design problem: ALON isn't a chat feature bolted onto a listings app. ALON is the product, and the listings are what it shows you while it works.

The Problem

The existing flow expected buyers to set filters, scroll through listings, and restart the same search every session. Phone numbers leaked on the first enquiry, triggering a loop of broker calls. No memory, no progress, no one working on your behalf between sessions.

The Insight

“An agent you talk to once is a chatbot. An agent that keeps working while you sleep is a different product. Every screen had to answer the same question: what is ALON doing for me right now?”

New Direction

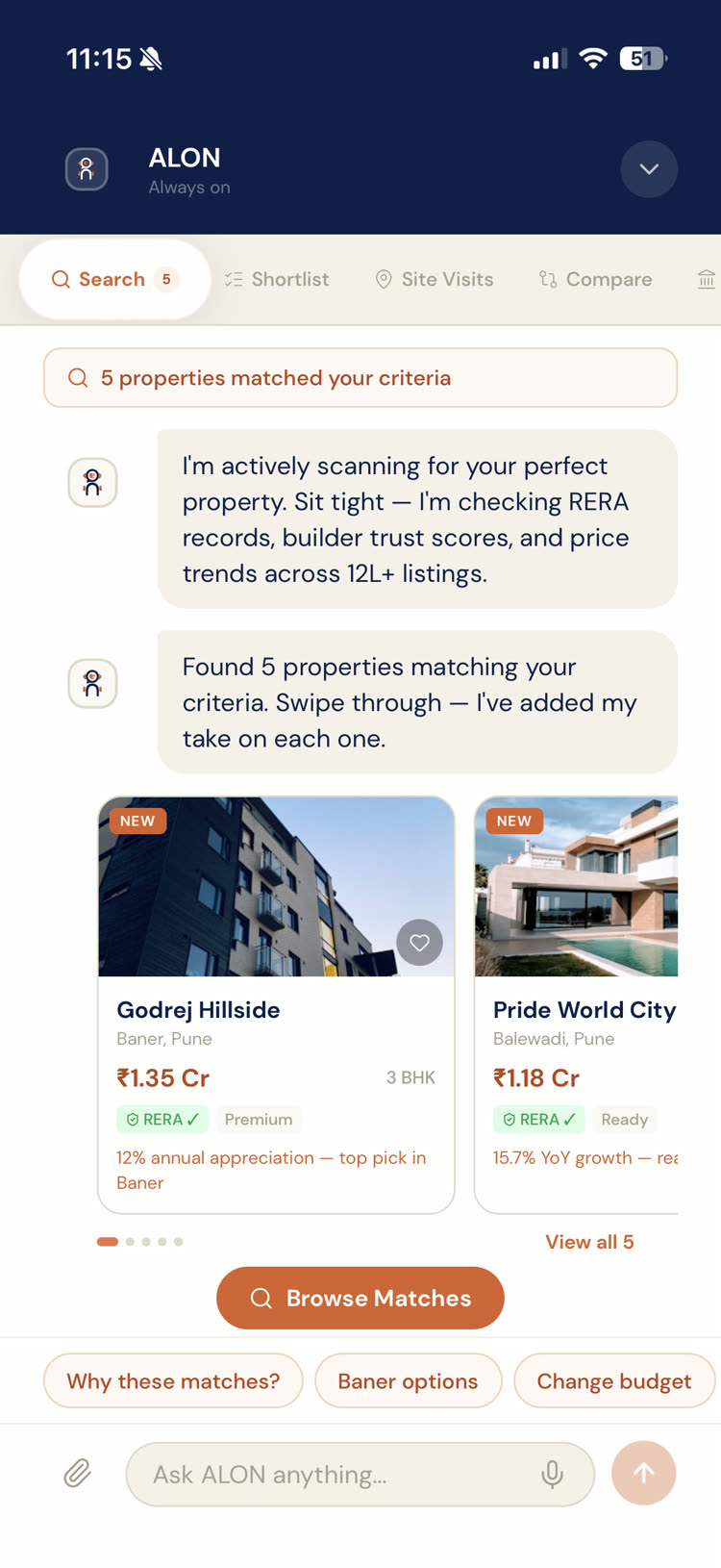

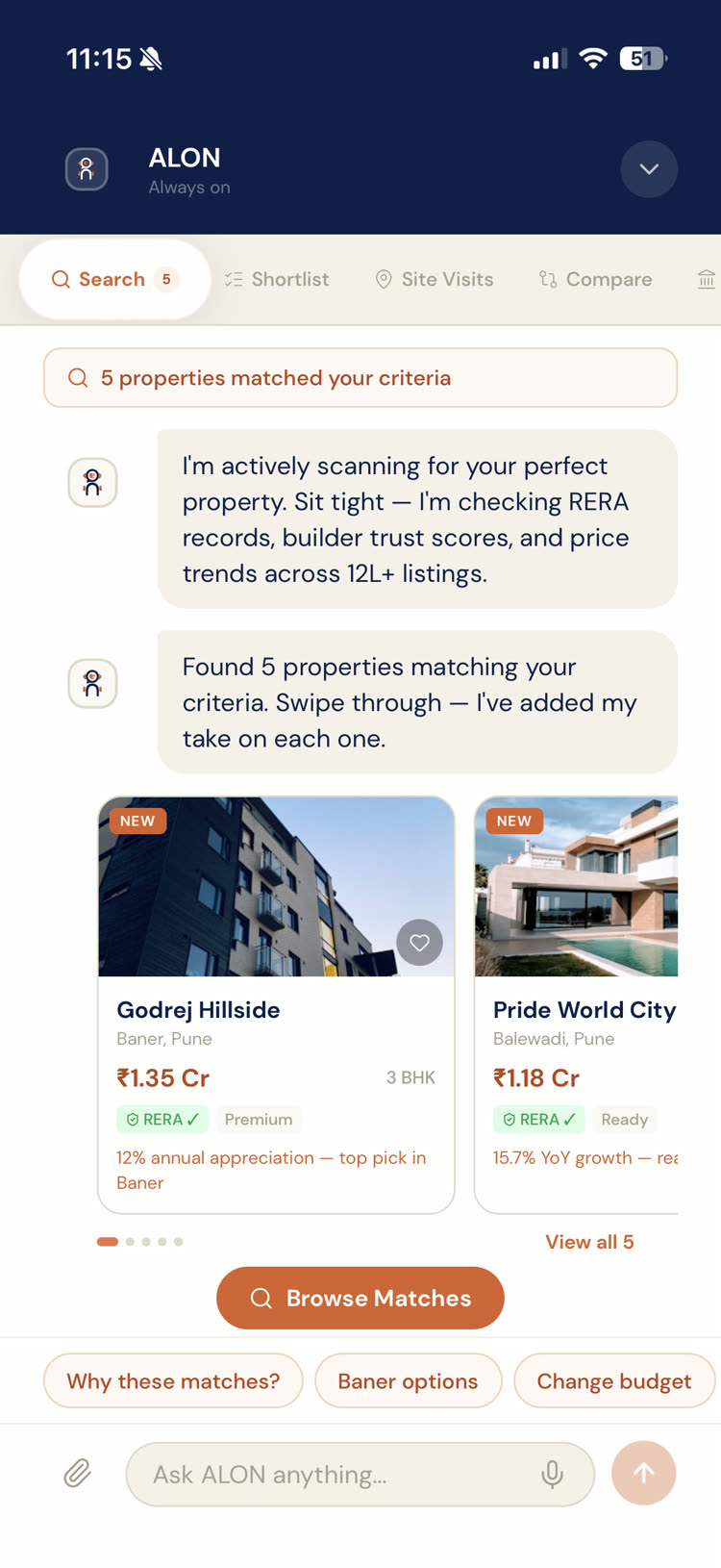

ALON scanning properties and surfacing matches with trust scores, RERA status, and appreciation data — all inside a stage-aware chat that remembers where you are in the buying journey.

An AI-native process, honestly

I didn't design this in Figma and hand it off. I designed it in code, on a phone, in roughly a month. The loop looked like this: PM and I agreed on a feature; I sketched the flow on paper to make sure it solved something real; I described the screen to Claude Code in the terminal — tokens, behavior, gesture, state — and iterated until the feel was right; I reviewed with PM, then walked into the CEO's office and handed him my phone. When he approved, developers pulled from the ALON repo and merged the screens into the UAT codebase with their backend wiring. Same React Native + Expo stack as Trythat.ai, so no rewrite at the boundary.

- Research: ChatGPT for synthesis, Smashing Magazine and Medium for agentic UX patterns. Not a research team, but enough signal to make the right choices

- Primary tool: Claude Code in the terminal. Every screen, animation, and interaction was described in words, generated in code, then refined against the running app

- Design system: defined once in constants/theme.ts — colors, type, spacing, radius, shadows. No Figma tokens, no drift, no translation step

- Empty states were the exception: I still opened Figma for those, because thinking through 'nothing here yet' is a spatial problem that benefits from a canvas

- Expo Go on my own device meant the prototype was the deliverable. The CEO, PM, and QA all reviewed the real app, not screenshots
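As a rough illustration of what a single-file token source like constants/theme.ts might look like, here is a minimal sketch. The five token groups (colors, type, spacing, radius, shadows) and the font families come from this write-up. Every concrete value, key name, and the `space` helper are placeholders assumed for the example, not the shipped tokens.

```typescript
// constants/theme.ts (illustrative sketch; all values are assumed placeholders)
export const theme = {
  colors: {
    background: "#FAF7F2", // warm neutral (assumed hex)
    surface: "#FFFFFF",
    navy: "#1B2A41",       // institutional depth (assumed hex)
    terracotta: "#C8553D", // brand accent (assumed hex)
    textPrimary: "#1F1F1F",
  },
  type: {
    display: { fontFamily: "DM Serif Display", fontSize: 28, lineHeight: 34 },
    body: { fontFamily: "DM Sans", fontSize: 16, lineHeight: 24 },
    caption: { fontFamily: "DM Sans", fontSize: 12, lineHeight: 16 },
  },
  spacing: { xs: 4, sm: 8, md: 16, lg: 24, xl: 32 },
  radius: { sm: 8, md: 12, pill: 999 },
  shadows: {
    // Keys follow React Native's shadow style props; elevation covers Android.
    card: {
      shadowColor: "#000000",
      shadowOffset: { width: 0, height: 2 },
      shadowOpacity: 0.08,
      shadowRadius: 8,
      elevation: 2,
    },
  },
} as const;

export type Theme = typeof theme;
export type SpacingKey = keyof Theme["spacing"];

// Hypothetical helper: screens ask for a named step instead of a raw number.
export function space(key: SpacingKey): number {
  return theme.spacing[key];
}
```

Because the object is declared `as const`, a screen that references a token name that does not exist fails at compile time, which is one way a code-only system can avoid drift.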

Feature Deep Dive

Bringing the logo to life

Marketing handed me the ALON wordmark. I asked for it as SVG on purpose — I wanted to decompose the character and animate it in code, without losing shape at any scale. The reference was Disney: caring, curious, quietly intelligent, occasionally funny. The point was to make the agent feel present without a dashboard of status indicators telling you it was working. I sketched a few passes on paper — what it does when it's idle, when you tap it, when you ignore it — then described each behaviour to Claude Code. What came out is a five-layer SVG with independent spring timelines. It breathes. Its ears flick at different intervals. Double-tap it and it jumps with a hand wave. Tap it five times in a row and it says 'Bhai, chill.' The entire animation lives in one reusable React Native component, portable across every screen that needs a sign of life.

- Five animated SVG layers (head, ears, hands, body, accent) with independent Reanimated shared values

- Entrance choreography: 900ms spring sequence, each layer delayed so the character assembles on screen

- Idle loop: head bob, body breath, ear flicks, subtle sway — all running in parallel, never static

- Touch reactions: tap squishes, double-tap jumps with a hand wave, long-press stretches then snaps back

- Speech bubble with personality pool — friendly greetings early, funny ones after repeat taps, reset at eleven

- Haptics wired to every reaction. The avatar feels present because it answers back in your hand, not just on screen
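The speech-bubble behaviour in the list above can be sketched as a small pure function, kept separate from the animation code. This is an assumption-heavy illustration, not the component's actual logic: the function name, the friendly lines, and the reading of "reset at eleven" as "the eleventh tap restarts the count" are all hypothetical. Only 'Bhai, chill.' and the five-tap threshold come from the text, and the sketch ignores the timing window that "in a row" implies.

```typescript
type BubbleState = { tapCount: number };
type BubbleResult = { state: BubbleState; line: string };

// Hypothetical copy, except "Bhai, chill." which is quoted in the write-up.
const FRIENDLY_LINES = ["Hi, I'm ALON.", "Still looking for you."];
const FUNNY_LINES = ["Bhai, chill."];

const FUNNY_FROM_TAP = 5; // the funny pool starts on the fifth tap
const RESET_AT_TAP = 11;  // assumed: the eleventh tap restarts the count

// Returns the next bubble line and the updated tap state.
// `random` is injectable so the choice is deterministic in tests.
function nextBubble(
  prev: BubbleState,
  random: () => number = Math.random
): BubbleResult {
  let tapCount = prev.tapCount + 1;
  if (tapCount >= RESET_AT_TAP) {
    tapCount = 1; // start over with the friendly pool
  }
  const pool = tapCount >= FUNNY_FROM_TAP ? FUNNY_LINES : FRIENDLY_LINES;
  const index = Math.min(pool.length - 1, Math.floor(random() * pool.length));
  return { state: { tapCount }, line: pool[index] };
}
```

Keeping the pool selection free of any animation or haptics calls means the personality logic can be tested without a device, while the component only decides how to present the returned line.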

User flow — 5 steps

05

What shipped

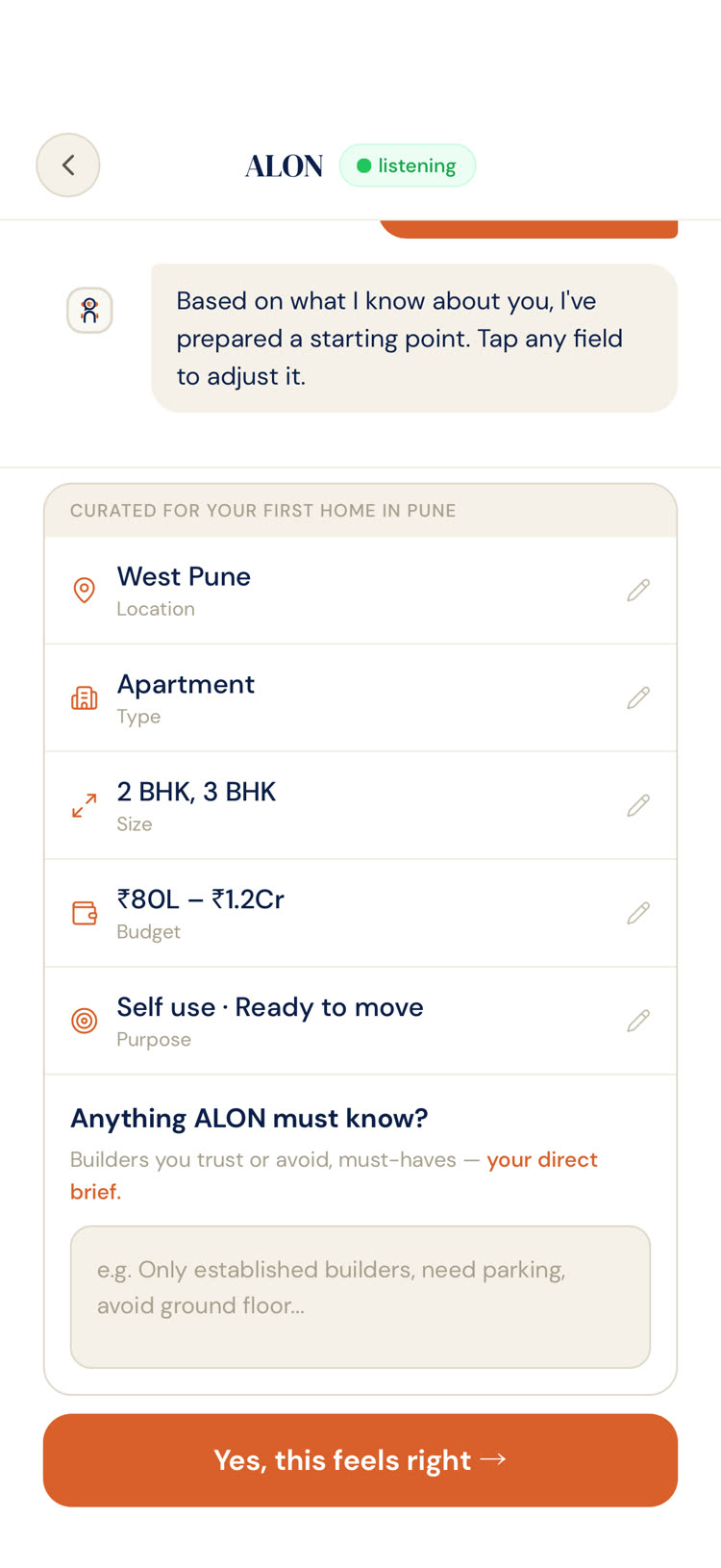

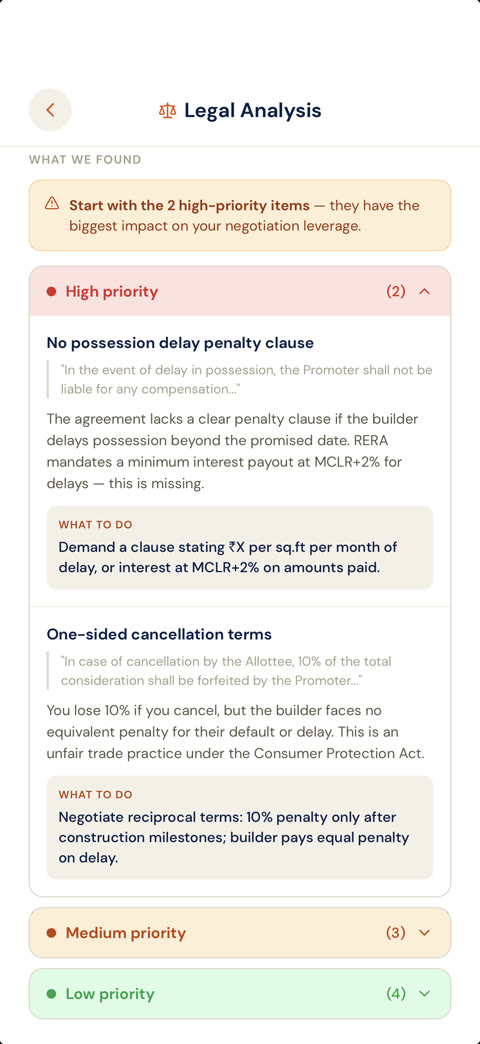

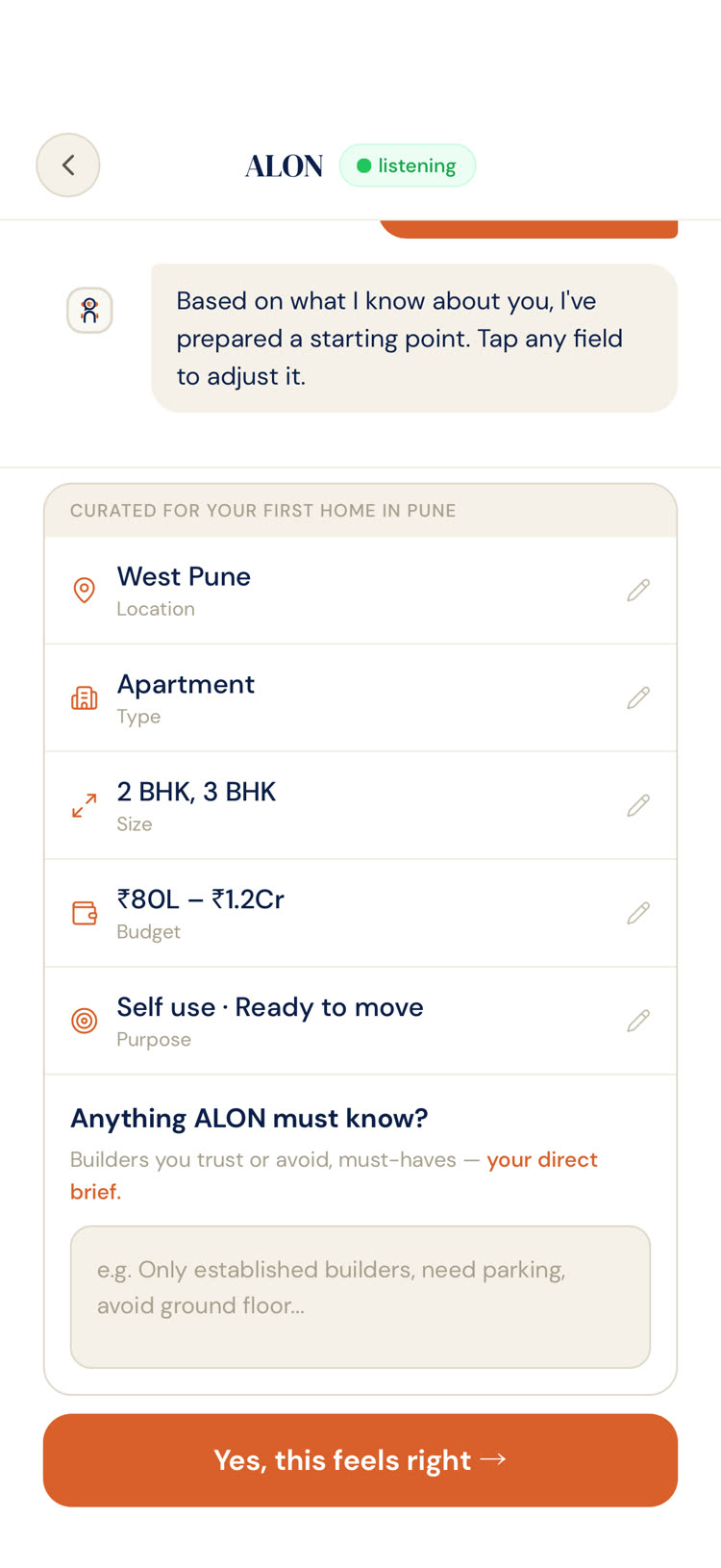

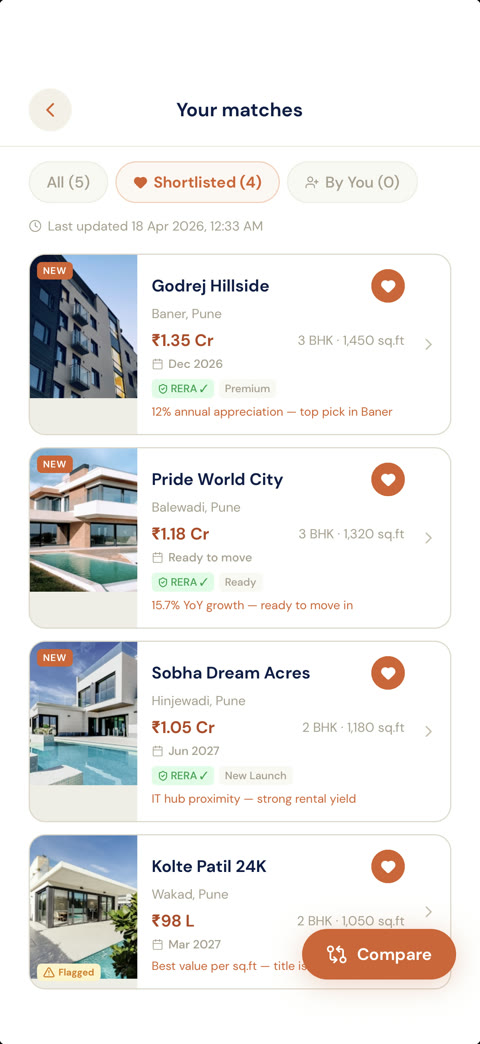

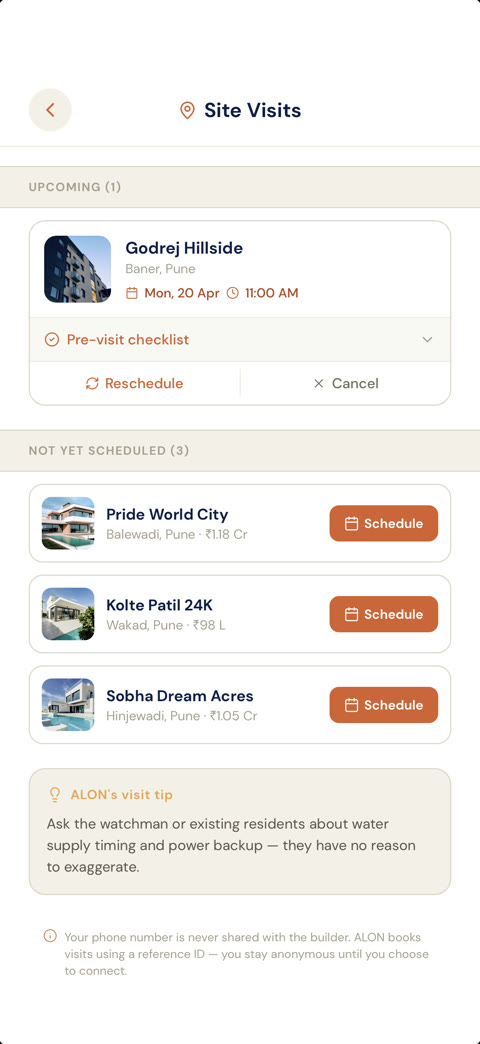

A nine-stage journey from search to possession, rendered as an expandable accordion on a single dashboard. Stage-aware chat that changes its suggested prompts based on where you are in the journey and what it already knows about you. Voice-first onboarding for property briefs, so people describe what they want in their own words instead of navigating dropdowns. Pull-down gesture to collapse a full-screen chat back into the dashboard. Skeleton loaders at every async boundary because real APIs take real time and silence reads as broken.

- Dashboard with journey accordion, stage navigator, and pinned chat bar that expands full-screen on tap

- Voice onboarding with animated field glows — location, budget, timeline, purpose, size, type — each lighting up as ALON extracts it from the transcript

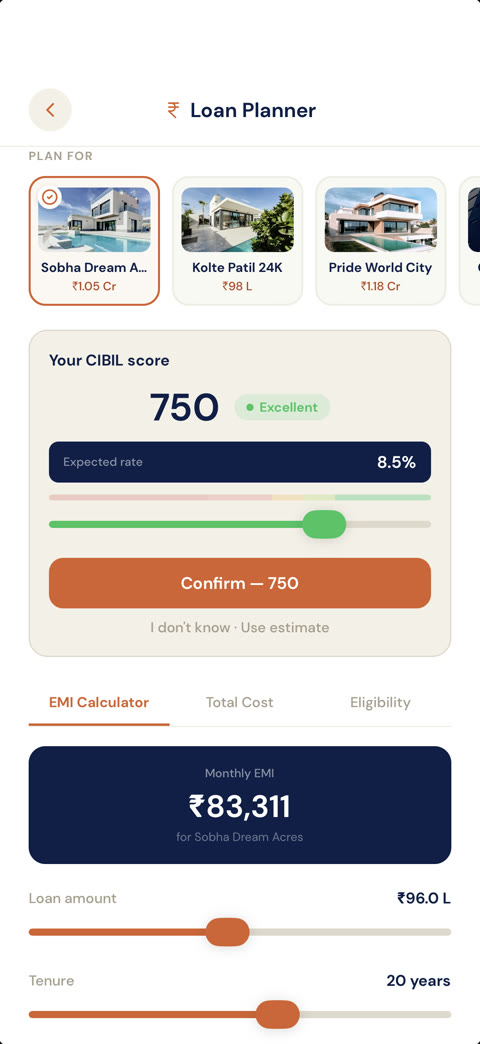

- Finance toolkit inside chat: EMI calculator, eligibility scoring, rate tiering by CIBIL, cost breakdowns rendered as cards in the conversation

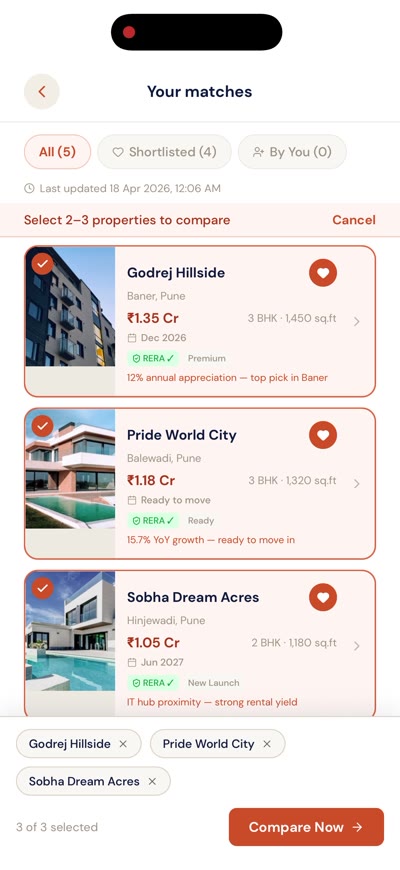
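For readers unfamiliar with the arithmetic behind an EMI card, here is a minimal sketch using the standard reducing-balance formula, EMI = P · r · (1 + r)^n / ((1 + r)^n − 1), where r is the monthly rate and n the tenure in months. The function names and the CIBIL rate tiers are invented for illustration. The write-up does not state the actual tiers or how the app computes them.

```typescript
// Standard reducing-balance EMI. Rate is an annual percentage, tenure in months.
function monthlyEmi(
  principal: number,
  annualRatePct: number,
  tenureMonths: number
): number {
  const r = annualRatePct / 12 / 100; // monthly rate as a fraction
  if (r === 0) {
    return principal / tenureMonths; // zero-interest edge case
  }
  const growth = Math.pow(1 + r, tenureMonths);
  return (principal * r * growth) / (growth - 1);
}

// Hypothetical rate tiers keyed by CIBIL score (placeholder numbers only).
function rateForCibil(score: number): number {
  if (score >= 800) return 8.5;
  if (score >= 750) return 8.75;
  if (score >= 700) return 9.25;
  return 10.0;
}

// Example: a 1,00,000 loan at 12% a year over 12 months.
const exampleEmi = monthlyEmi(100000, 12, 12);
console.log(exampleEmi.toFixed(2)); // prints "8884.88"
```

A chat card would then format the result alongside the tenure and total interest, with the tier lookup deciding which rate to feed in.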

- Comparison scoring across seven factors (price per sqft, ROI, builder trust, appreciation, convenience, safety, fit) with ALON's recommended pick

- Code-only design system: warm neutrals, navy for institutional depth, terracotta as brand accent, DM Serif Display + DM Sans
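The seven-factor comparison mentioned above could be modelled as a weighted average over normalised factor scores, with the highest total becoming the recommended pick. This is a sketch under stated assumptions: the factor names come from the list, but the 0 to 1 normalisation, the equal default weights, and the `overallScore` and `recommend` functions are hypothetical, not ALON's actual scoring.

```typescript
const FACTORS = [
  "pricePerSqft",
  "roi",
  "builderTrust",
  "appreciation",
  "convenience",
  "safety",
  "fit",
] as const;

type Factor = (typeof FACTORS)[number];
// Assumed convention: each score is already normalised to 0..1, higher is better.
type FactorScores = Record<Factor, number>;

interface Candidate {
  id: string;
  scores: FactorScores;
}

// Assumed default: all seven factors weigh the same.
const EQUAL_WEIGHTS: FactorScores = {
  pricePerSqft: 1,
  roi: 1,
  builderTrust: 1,
  appreciation: 1,
  convenience: 1,
  safety: 1,
  fit: 1,
};

// Weighted average of the seven factor scores.
function overallScore(
  scores: FactorScores,
  weights: FactorScores = EQUAL_WEIGHTS
): number {
  let weighted = 0;
  let totalWeight = 0;
  for (const factor of FACTORS) {
    weighted += scores[factor] * weights[factor];
    totalWeight += weights[factor];
  }
  return totalWeight === 0 ? 0 : weighted / totalWeight;
}

// Returns the candidate with the highest overall score, or undefined if empty.
function recommend(
  candidates: Candidate[],
  weights: FactorScores = EQUAL_WEIGHTS
): Candidate | undefined {
  let best: Candidate | undefined;
  let bestScore = -Infinity;
  for (const candidate of candidates) {
    const score = overallScore(candidate.scores, weights);
    if (score > bestScore) {
      best = candidate;
      bestScore = score;
    }
  }
  return best;
}
```

Passing a different weights object (for example, a heavier `safety` weight for a family buyer) is one way the same comparison could adapt to what the agent already knows about the user.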

Key Screens

My role

Sole designer. I owned the end-to-end: flows, IA, visual system, animation, and the React Native implementation that developers pulled from. I wasn't writing production backend code (devs still owned the API layer, data, and deployment), but every pixel that shipped came out of a repo I was committing to directly. The PM shaped scope and kept us honest about what 'ready for beta' meant. The QA engineer joined the design phase, not the test phase, because the prototype ran on real devices from day one. We caught gesture bugs, contrast issues, and layout breakage on different screen sizes while the design was still being decided, instead of in a ticket backlog after handoff.

What happened

ALON is in internal beta inside Trythat.ai. Stakeholder reviews happened on a handheld device, not a wall-projected Figma frame, and that changed the nature of the feedback. The CEO held the app, used it, and told the team to match everything one-to-one in production. The design system being code-native meant that directive was actually possible to honour — developers imported the same tokens and the same animated logo component I'd been iterating on, rather than interpreting a spec. The QA engineer catching gesture and edge-case issues early meant the transition from internal beta to a wider rollout has far fewer surprises waiting in it.

First demo

CEO approval

20+

Screens shipped

~1 month

Design to beta

Zero

Dev handoff rework

08

Reflection

Two things changed how I think about design after this project.

First, empty states aren't an afterthought. Half the time a user spends in an app is on a screen with nothing on it yet: first run, zero shortlist, no results, no messages, no network. If you only design the populated state you've designed half the product. I caught myself missing these screens on the first pass of almost every feature.

Second, latency is a design material. Skeleton loaders aren't polish, they're the difference between an app that feels fast and one that feels broken. I never thought about this seriously until I was the one wiring mock data into the UI and watching an empty screen sit there for eight hundred milliseconds.

The bigger lesson is about where the design lives. Figma is a great tool, but the moment I handed the CEO my phone with a working build, every review got more concrete. Feedback shifted from 'move that twelve pixels left' to 'this gesture feels wrong'. The AI-native part of this project matters less than people think. The real shift was designing in the same material the product ships in.